MI300X

Leading-edge, industry-standard accelerator module for generative AI, training, and high-performance computing.

Leading-Edge Discrete GPU for AI and HPC

The AMD Instinct™ MI300X discrete GPU is based on next-generation AMD CDNA™ 3 architecture, delivering leadership efficiency and performance for the most demanding AI and HPC applications.

It is designed with 304 high-throughput compute units, AI-specific functions including new data-type support, and photo and video decoding, plus an unprecedented 192 GB of HBM3 memory on a GPU accelerator. State-of-the-art die stacking and chiplet technology in a multi-chip package propel generative AI, machine learning, and inferencing, while extending AMD leadership in HPC acceleration.

Performance Leap

The MI300X delivers a major performance leap over our prior generation. Compared with prior AMD Instinct MI250X accelerators, it offers 13.7x the peak AI/ML workload performance (MI300X using FP8 with sparsity versus MI250X using FP16) and a 3.4x peak advantage for HPC workloads on FP32 calculations.

Designed to Accelerate Modern Workloads

The growing demands of generative AI, large language models, machine learning training, and inferencing put next-level pressure on GPU accelerators. The discrete AMD Instinct MI300X GPU delivers leadership performance and efficiency that can help organizations get more computation done within a similar power envelope compared to last-generation accelerators.

For HPC workloads, efficiency is essential, and AMD Instinct GPUs have been deployed in some of the most efficient supercomputers on the Green500 list. These systems, and yours, can take advantage of a broad range of math precisions to push HPC applications to new heights.

Based on 4th Gen Infinity Architecture

The AMD Instinct MI300X is one of the first accelerators built on the AMD CDNA 3 architecture, delivering high throughput through improved AMD Matrix Core technology and highly streamlined compute units.

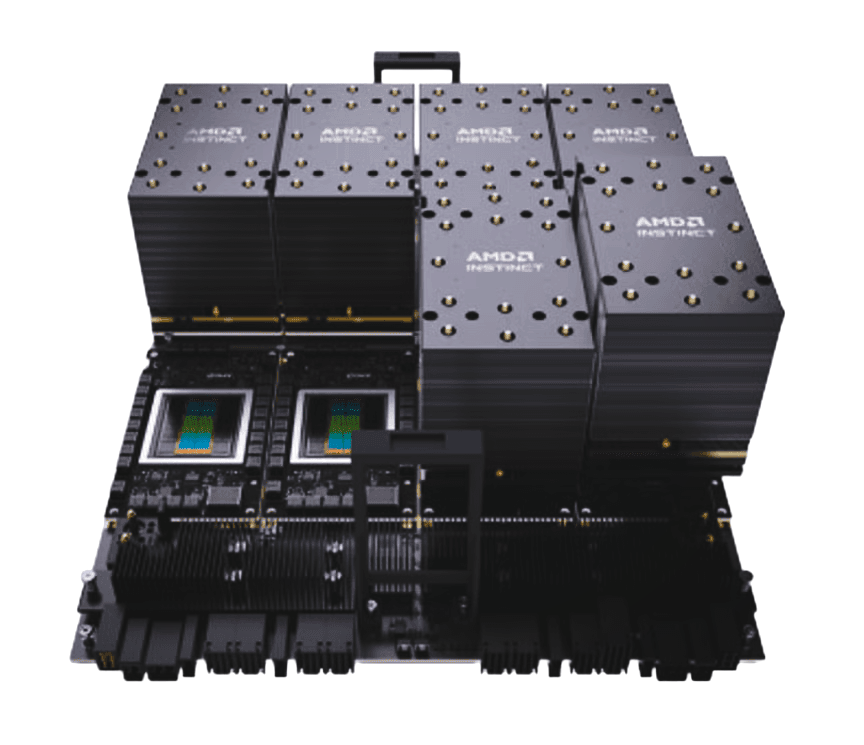

Multi-Chip Architecture

The MI300X uses state-of-the-art die stacking and chiplet technology enabling dense compute and high-bandwidth memory integration. This helps reduce data-movement overhead while enhancing power efficiency.

- 8 XCDs with 38 compute units each

- 256 MB AMD Infinity Cache™

- 4 supported decoders (HEVC/H.265, AV1, etc.)
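As a quick consistency check, the XCD figures above multiply out to the 304 compute units cited in the introduction. A minimal Python sketch; the constant and function names are illustrative only and are not part of any AMD API:

```python
# Consistency check using the figures from the list above.
# Names here are illustrative, not an AMD or ROCm API.

XCD_COUNT = 8      # accelerator complex dies (XCDs) per MI300X
CUS_PER_XCD = 38   # compute units per XCD


def total_compute_units(xcds: int, cus_per_xcd: int) -> int:
    """Return the total compute-unit count across all XCDs."""
    return xcds * cus_per_xcd


total = total_compute_units(XCD_COUNT, CUS_PER_XCD)
print(f"{XCD_COUNT} XCDs x {CUS_PER_XCD} CUs = {total} CUs")  # prints "8 XCDs x 38 CUs = 304 CUs"
```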

Coherent Shared Memory

Machine learning and large language models have become highly data-intensive. AMD Instinct accelerators facilitate large models with shared memory and caches.

- 192 GB HBM3 shared coherently

- 5.3 TB/s local bandwidth

- 128 GB/s bidirectional bandwidth per GPU
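To give a rough feel for what these memory figures mean, the back-of-envelope sketch below estimates how long it takes to read all of HBM once at peak bandwidth, and an upper bound on how many model parameters fit in memory. It assumes decimal units (1 GB = 10^9 bytes, 1 TB/s = 10^12 bytes/s), ideal peak bandwidth, and model weights only (no activations, KV cache, or runtime overhead); the helper names are hypothetical.

```python
# Back-of-envelope estimates from the memory figures listed above.
# Assumptions: decimal units, ideal peak bandwidth, model weights only.
# Helper names are hypothetical, for illustration.

HBM_CAPACITY_GB = 192     # HBM3 capacity per GPU
HBM_BANDWIDTH_TBPS = 5.3  # peak local memory bandwidth, TB/s


def full_sweep_ms(capacity_gb: float, bandwidth_tbps: float) -> float:
    """Time to read every byte of memory once at peak bandwidth.

    GB / (TB/s) = 1e9 B / 1e12 B/s = 1e-3 s, so the ratio is already in ms.
    """
    return capacity_gb / bandwidth_tbps


def max_params_billion(capacity_gb: float, bytes_per_param: int) -> float:
    """Upper bound on parameters (in billions) whose weights fit in memory."""
    return capacity_gb / bytes_per_param


print(f"Full-memory sweep: ~{full_sweep_ms(HBM_CAPACITY_GB, HBM_BANDWIDTH_TBPS):.1f} ms")
for name, nbytes in [("FP16", 2), ("FP8", 1)]:
    print(f"{name} weights: up to ~{max_params_billion(HBM_CAPACITY_GB, nbytes):.0f}B parameters")
```

Real workloads fall short of these bounds, since sustained bandwidth is below peak and activations and caches also consume memory.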

For more information about the AMD Instinct MI300X, the AMD Instinct MI300X Platform, and the AMD ROCm™ software platform, visit AMD.com/INSTINCT.
